The variety of cognitive biases

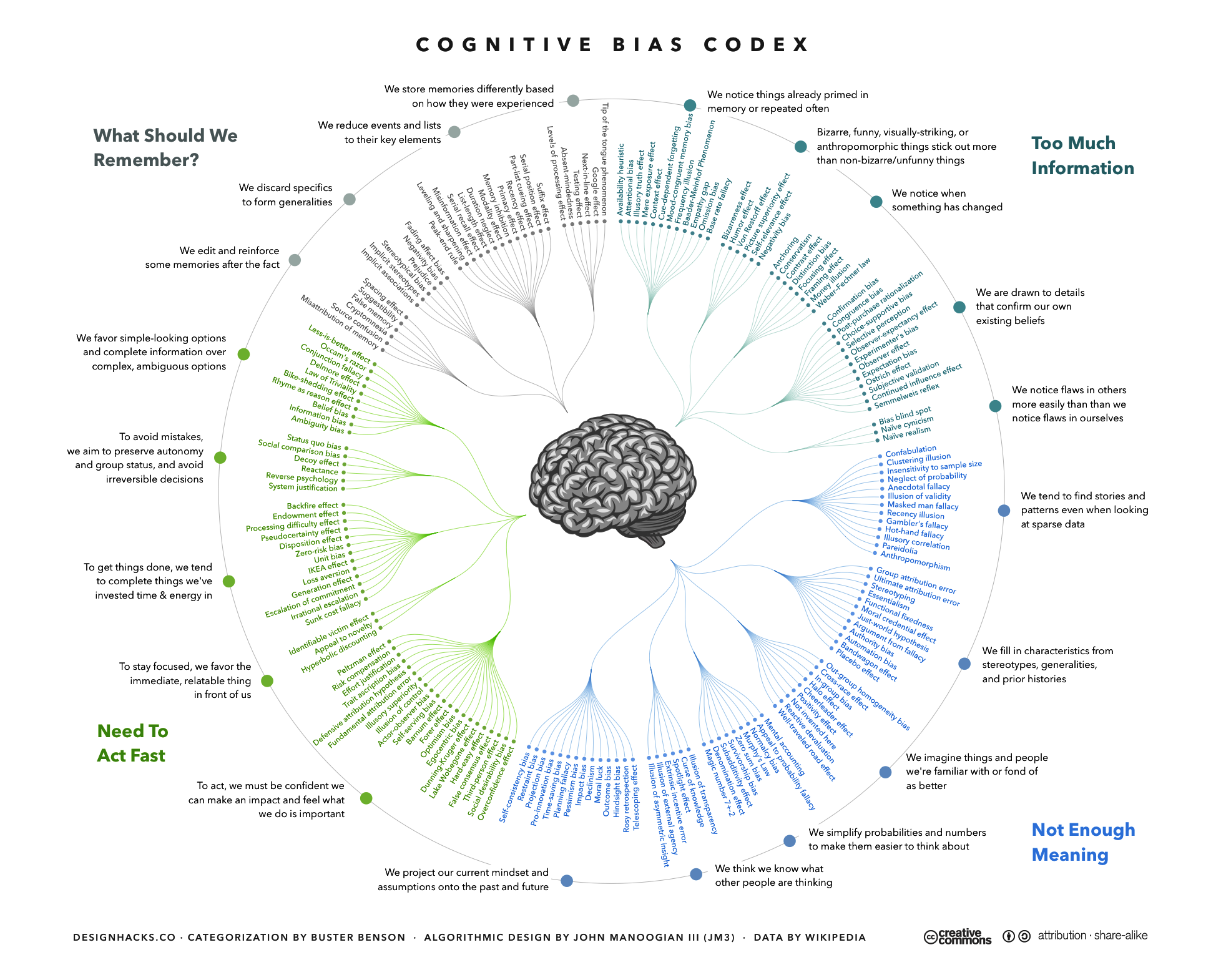

You may have seen this infographic. It’s hard to get much out of this codex because of the huge amount of information it’s trying to convey, but two things seem clear: there are a lot of identified biases affecting our everyday reasoning and judgment, and psychologists and behavioral scientists are good at naming things creatively.

The Codex of Cognitive Biases – yes there are a lot of them.

What do we mean by the term cognitive bias?

A cognitive bias refers to a systematic error in thinking, where judgment or behavior deviates from rationality or what would be considered desirable according to accepted norms.

Some biases are related to memory and can distort the types of information and events that we recall. Examples include rosy retrospection, or remembering the past as better than it was, and the tendency to better remember things that are congruent with our current mood, referred to as the mood-congruent memory bias or state-dependent memory. Other biases affect how we process information or what information we pay attention to, such as the ostrich effect, the tendency to ignore negative information or situations.

Some biases are classified as “cold”, such as the availability heuristic, where we judge events to be more likely based on how vividly we can imagine or remember them, or base-rate neglect, which is the tendency to ignore general information about the prevalence of something in favor of specific information related to an individual case. These biases do not reflect a person’s own self-interest and are instead related to errors in information processing. Other cognitive biases, such as the overconfidence effect, are more motivated by self-interest.
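To make base-rate neglect concrete, here is a minimal sketch using Bayes' rule. The screening-test scenario and all three numbers are hypothetical and chosen purely for illustration; they do not come from any of the studies cited in this article.

```python
def posterior(base_rate, sensitivity, false_positive_rate):
    """Probability of having a condition given a positive test (Bayes' rule).

    All inputs are probabilities between 0 and 1.
    """
    true_positives = base_rate * sensitivity
    false_positives = (1 - base_rate) * false_positive_rate
    return true_positives / (true_positives + false_positives)


# Hypothetical numbers: 1% of people have the condition, the test catches
# 90% of true cases, and 9% of healthy people also test positive.
chance = posterior(base_rate=0.01, sensitivity=0.90, false_positive_rate=0.09)
print(f"Chance of having the condition after a positive test: {chance:.1%}")
```

With these made-up numbers the answer comes out at roughly 9%, far lower than the 90% that many people would guess if they focused only on the accuracy of the test and ignored how rare the condition is.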

Examples of cognitive biases in everyday life

To understand better how these biases can play out in everyday life, let’s go through some examples:

Example #1

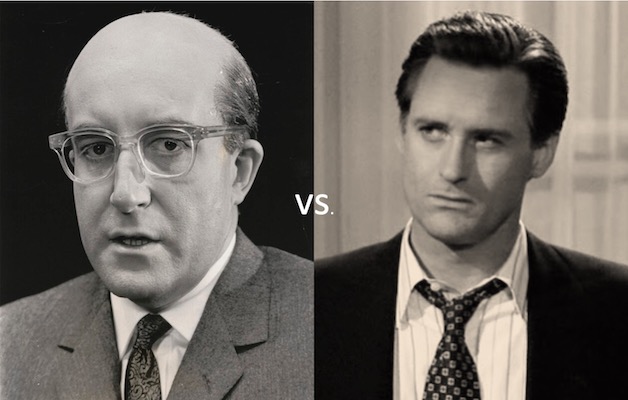

Who would you rather have in office?

Of course, this decision is difficult to make because you know very little about either candidate other than how they look. Nonetheless, voters have repeatedly shown preferences for political candidates who are physically attractive[1]. This tendency for a positive impression of someone in one area to positively influence one’s opinion of their attributes in other areas is called the halo effect.

Bonus question - who are these two “candidates”? (answer at end)

Example #2

Let’s try another one: Sarah is a retired nurse. She is walking her dog down the street and carrying a bag containing a pint of cherry ice cream. Which of the following is more likely?

a. Sarah loves dogs.

b. Sarah loves dogs, and she loves cherry ice cream.

Because you were given information about Sarah that included her having a dog and carrying a pint of cherry ice cream, you may have wanted to pick option b. You would be in the majority, as 85% of people chose this option. We want to believe that the option that is more representative of what we were expecting about Sarah is more likely to be true. However, the probability that a person loves dogs (whether or not they love cherry ice cream) is always at least as high as the probability that they love both dogs AND cherry ice cream, because everyone who loves both also loves dogs. This tendency to judge a combination of specific conditions as more probable than a single general one is known as the conjunction fallacy[2].
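The rule behind this example can be checked with a small simulation. The sketch below is illustrative only: the probabilities `p_dogs` and `p_ice_cream` are made-up values, and the two preferences are assumed to be independent. Neither assumption comes from the research cited here.

```python
import random


def simulate(n=100_000, p_dogs=0.9, p_ice_cream=0.6, seed=0):
    """Simulate n imaginary people and return two observed shares:
    (share who love dogs, share who love dogs AND cherry ice cream)."""
    rng = random.Random(seed)
    loves_dogs = 0
    loves_both = 0
    for _ in range(n):
        dogs = rng.random() < p_dogs            # hypothetical probability
        ice_cream = rng.random() < p_ice_cream  # hypothetical probability
        loves_dogs += dogs
        loves_both += dogs and ice_cream
    return loves_dogs / n, loves_both / n


share_dogs, share_both = simulate()
print(f"Option a (loves dogs):               {share_dogs:.3f}")
print(f"Option b (loves dogs and ice cream): {share_both:.3f}")
```

Whatever values you plug in, option b can never come out ahead of option a, because every simulated person counted for option b is also counted for option a.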

There are other biases that affect how we estimate probabilities, including overestimating the likelihood of long-shot events such as winning the lottery or being attacked by a shark.

Example #3

Now consider this thought experiment. Imagine you surveyed a large group of newlywed couples and asked them to estimate their likelihood of getting divorced. You let them know that about 40% of marriages end in divorce. Even though you have given them the information regarding their chances, where do you think the average estimate of the group will land?

You probably guessed that the average estimate would be well below the actual rate. In fact, newlyweds estimate their own chances of divorce at zero, despite the widely known statistics about divorce. Similarly, people overestimate the chances of positive life events occurring such as owning a home or living into old age[3]. This tendency to overweight the likelihood of experiencing positive events and underweight the likelihood of experiencing negative events is known as optimism bias.

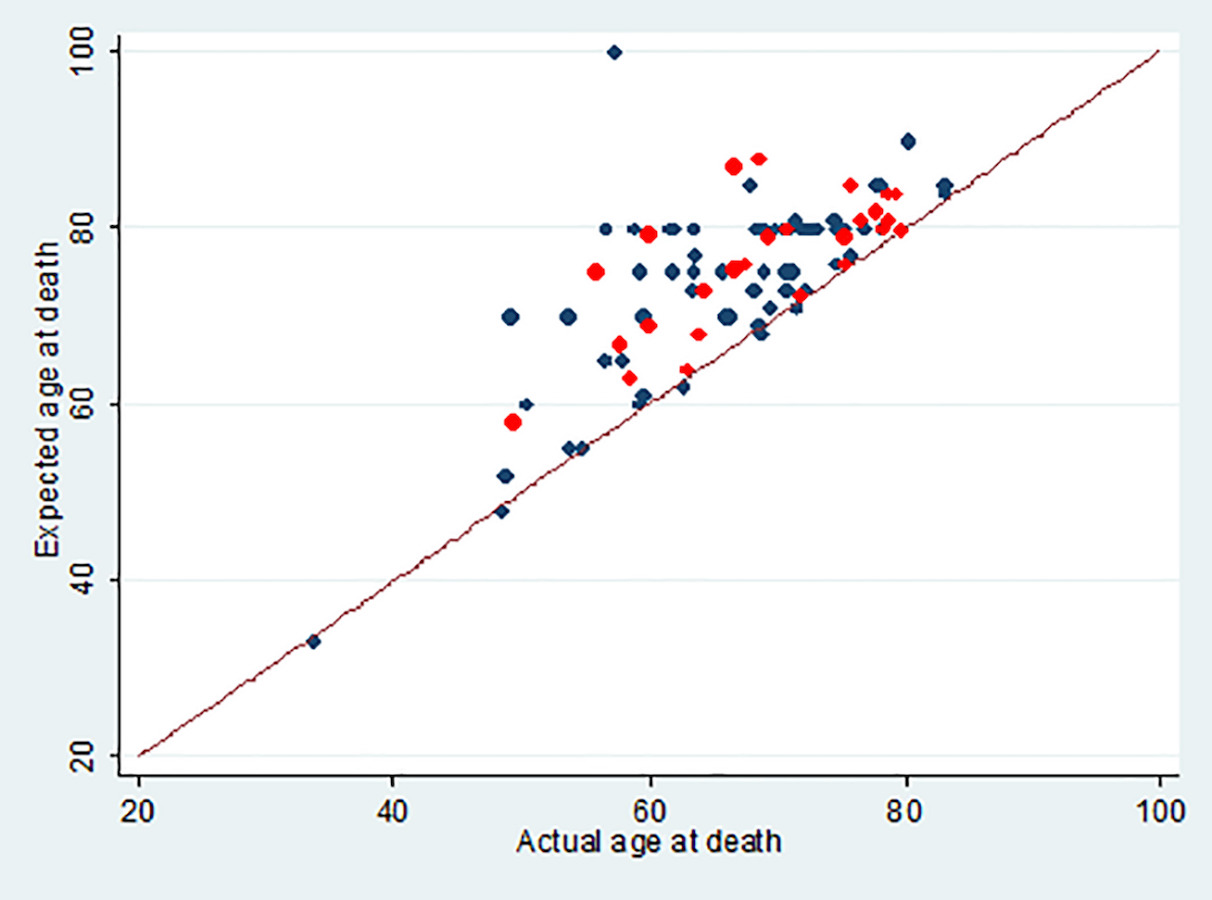

Optimism bias can affect our ability to prepare for future events such as end-of-life care. A recent study demonstrated optimism bias in health care by comparing how long advanced cancer patients expected to live relative to their actual life expectancy. Not a single patient underestimated their life expectancy[4].

A recent study shows how optimism bias can affect estimates of life expectancy in advanced cancer patients. No patient underestimated their own life expectancy.

Explanations for cognitive biases

Explanations for cognitive biases often relate to the application of heuristics, or mental shortcuts. We receive a lot of information each day, often more than we can process. Because our cognitive resources are limited, these shortcuts allow us to simplify information processing. Biases can serve as rules of thumb that help us make sense of the world more quickly, and a dominant theory suggests that biased decisions arise from fast, intuitive processes that can be corrected by slower, more effortful consideration[5] [6]. However, recent evidence suggests that biases may also arise through gradual processes of incorporating information[7]. Emotions, individual motivations, social pressures, decreases in cognitive flexibility, and other limits on information processing can all contribute to biases.

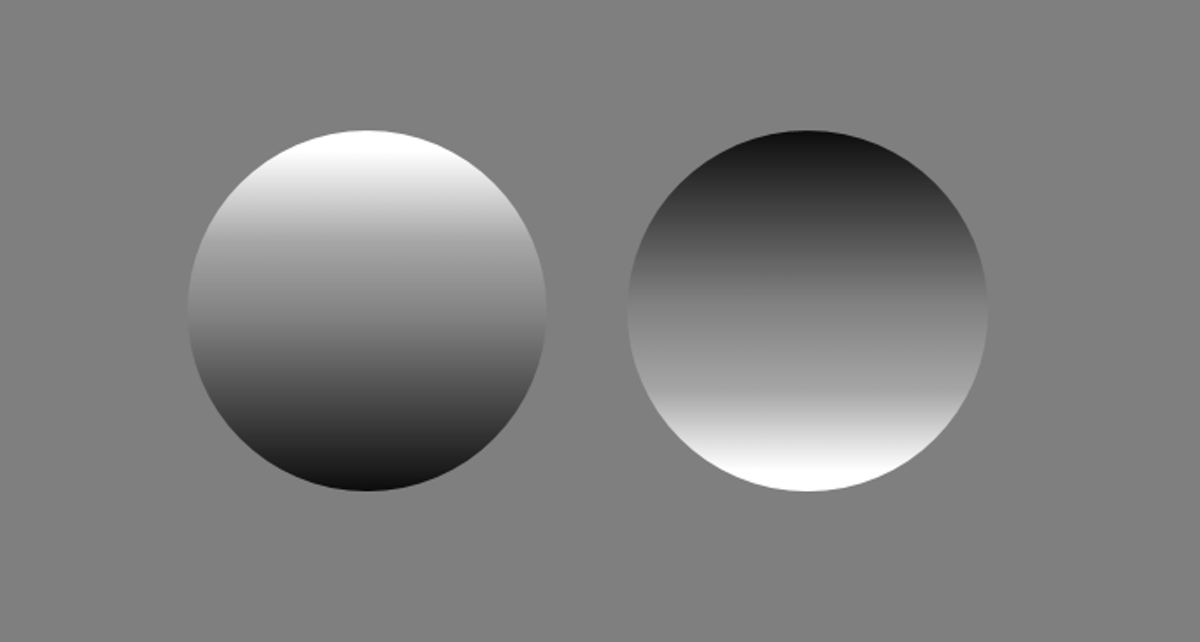

One idea, called efficient coding, suggests that such capacity limitations biased brain evolution towards information processing strategies that are maximally efficient[8]. To see an example of this, take a look at the circles below:

The circle on the left appears convex and the right concave.

The circle on the left is usually perceived as convex (protruding outwards) while the circle on the right is usually perceived as concave (sunken inwards). If there were an overhead light source, a convex object would be lighter at the top, because its upward-facing portions catch the light, and darker at the bottom, as in the left circle. A concave object would instead be darker at the top, because the upper part of the hollow faces downwards and is obscured, as in the right circle. Our assumptions about these circles aren’t surprising, since we evolved in a world with an overhead light source: the sun. Similarly, our accuracy is better for perceiving line orientations that we encounter more often in the world, such as horizontal lines[9]. The need to process the world efficiently may analogously account for cognitive biases[10].

How can we mitigate cognitive biases?

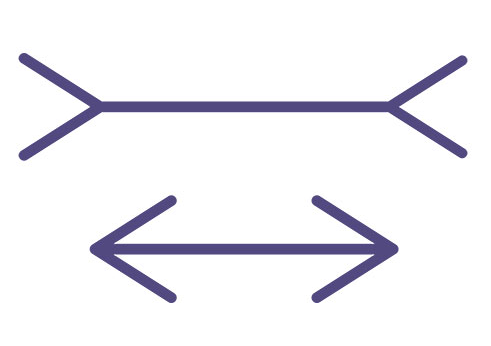

Take a look at the image below. Which line is longer? Even though both line segments are equal in length, and even though we can measure them and understand the reasons for this optical illusion, we still tend to perceive the upper line as longer. Similarly, in some cases we may not be able to change the biases in how we process the world.

The Müller-Lyer optical illusion. Both line segments are the same length, but we perceive the upper one as longer.

Instead, we can focus on strategies to mitigate them. Awareness of biases is an important step. Researchers have found that training participants to recognize biases in their own decision-making reduced the effects of these biases by 29%[11]. Of course, we may not recognize these biases as often in the real world compared to an artificial lab setting, but a video game that trains players to recognize cognitive bias through lifelike experiences has shown promising results[12]. Awareness of cognitive biases can also help us more often consider factors that influence our decisions and challenge our own thinking.

Awareness of biases also allows us to build procedures that prevent us from acting on biased thinking. For example, simple checklists can help surgeons make lifesaving decisions more effectively[13]. Giving short-term financial incentives or using pre-commitment strategies that bind our future selves to certain behaviors can be effective ways of addressing the fact that we overvalue present outcomes relative to future ones (present bias)[14].

Get in touch

Find out more about how Collabree’s solution addresses cognitive biases by contacting us here:

Dr. Anjali Raja Beharelle

CSO – Collabree AG

[email protected]

Pascal Kurz

CEO – Collabree AG

[email protected]

Answer to bonus question: Peter Sellers as President Merkin Muffley in Dr. Strangelove and Bill Pullman as President Thomas Whitmore in Independence Day.

Literature

[1]: White, Andrew Edward and Kenrick, Douglas T. “Why Attractive Candidates Win”. The New York Times, Nov. 1, 2013, https://www.nytimes.com/2013/11/03/opinion/sunday/health-beauty-and-the-ballot.html

[2]: Tversky, A. and Kahneman, D. Extensional versus intuitive reasoning: The conjunction fallacy in probability judgment. Psychological Review. 90 (4): 293–315, (1983). doi:10.1037/0033-295X.90.4.293.

[3]: Weinstein ND. Unrealistic optimism about future life events. J Pers Soc Psychol., 39(5):806-820. (1980). doi:10.1037/0022-3514.39.5.806

[4]: Finkelstein, E.A., Baid, D., Cheung, Y.B., Schweitzer, M.E., Malhotra, C., Volpp, K., Kanesvaran, R., Lee, L.H., Dent, R.A., Ng Chau Hsien, M., Bin Harunal Rashid, M.F. and Somasundaram, N. Hope, bias and survival expectations of advanced cancer patients: A cross-sectional study. Psycho-Oncology, 30: 780-788 (2021). https://doi.org/10.1002/pon.5675

[5]: Kahneman, D. Maps of bounded rationality: psychology for behavioral economics. Am. Econ. Rev. 93, 1449–1475 (2003).

[6]: Fiske, S. T. & Taylor, S. E. Social Cognition: From Brains to Culture (Sage, 2013).

[7]: Eldar E, Felso V, Cohen JD, Niv Y. A pupillary index of susceptibility to decision biases. Nat Hum Behav. 5(5):653-662. (2021). doi:10.1038/s41562-020-01006-3. Epub 2021 Jan 4. PMID: 33398147.

[8]: Barlow, H. B. in Sensory Communication (ed. Rosenblith, W. A.) 217–234 (MIT Press, Boston, 1961).

[9]: Girshick, A., Landy, M. & Simoncelli, E. Cardinal rules: Visual orientation perception reflects knowledge of environmental statistics. Nature Neuroscience. 14, 926-32 (2011). doi:10.1038/nn.2831.

[10]: Prat-Carrabin, A. and Woodford, M. Efficient coding of numbers explains decision bias and noise. bioRxiv 2020.02.18.942938 (2020). doi: https://doi.org/10.1101/2020.02.18.942938

[11]: Sellier AL, Scopelliti I, Morewedge CK. Debiasing training improves decision making in the field. Psychol Sci. 30(9):1371-1379 (2019). doi:10.1177/0956797619861429

[12]: Yagoda, B. “The Cognitive Biases Tricking your Brain”. The Atlantic, Sept., 2018, https://www.theatlantic.com/magazine/archive/2018/09/cognitive-bias/565775/

[13]: Gawande, A. The checklist manifesto: How to get things right. (2010). New York: Metropolitan Books.

[14]: Loewenstein, G., Price, J. & Volpp, K. Habit formation in children: Evidence from incentives for healthy eating. J. Health Econ. 45, 47–54 (2016).